- cross-posted to:

- technology@lemmy.ml


Seemed a likely outcome. While the release kept slipping, there were stories that they had spent ungodly amounts of money on a training attempt and then scrapped it because the result wasn’t actually any better, and that this happened multiple times.

So if they were truly stuck, what to do? They could admit they were stuck and watch the economic collapse as investors realize they were mistaken about how far along the technology curve things were, or they could market the hell out of GPT-5, pretend it’s amazing, and hope enough suckers and LLM latecomers buy into that narrative to carry it through. Like Sam Altman acting ‘scared’ of what GPT-5 is going to be, “what have we done?”, in a very melodramatic way, as if he were Oppenheimer or something, and likening it to the Death Star (all in all, a very ‘wtf’ situation: if it were really as dangerous as you say, you seem awfully eager to get it going).

So we have an incremental iteration with some good, some bad, and perhaps better overall, but in the context of the ungodly investment in the LLM sector, it’s way, way less than one should reasonably expect.

Sounds like the bubble could burst soon. It’ll be an epic collapse if it happens.

Gods I hope so.

No. The general economy is pretty poor right now, and an AI collapse would cost lots of jobs, incomes, and lives. A collapse doesn’t just affect that industry. It spreads and affects finance and lending globally.

🤞

Like Sam Altman acting ‘scared’ of what GPT-5 is going to be, “what have we done?”

They do this shit every release lmao.

I remember when GPT-4 (? 3?) was close to release, there were some very “organic”, totally-not-paid-for articles about GPT employees being afraid of the new model loooool.

It’s been obvious since early 2025 that they are chasing the next step change but not getting any closer to it. OpenAI is in a catastrophic financial position and it’s hard to imagine a scenario where it gets any better.

Ironically, I think only AGI could get them out of this pickle, but even they are starting to realize it’s not around the corner.

AGI might be just around the corner, or it might be indefinitely far off, but either way I don’t think “just more LLM” is going to get there, and that seems to be all the AI industry is really equipped to handle at the moment.

Ironically, getting to AGI might take a bubble pop, to stop the current LLM architectures from sucking up all the resources and let other approaches breathe a little.

More practically, I’d have expected to see more engaged robotics, but it seems all the money is being spent on pure online AI approaches.

Seriously. Neural networks can approximate literally any function, and the lumbering giants have all decided ‘what’s the next word?’ is the only function worth pursuing.

It’d take a sliver of their current budget to try starting over like it’s 2020. Compare with benchmarks that now look quaint. Enjoy some wisdom where previously they could only guess. Buuut nope: all LLM, all the time, and big big big.

I can’t wait for this shitty bubble to burst and for the CS job market to recover.

Oh, the CS job market may just be more persistently toast. Yes, there have been layoffs attributed to AI, but I think a lot of those businesses were kind of itching to do those layoffs anyway. There was way too much overhiring in the security sector in general, plus when the AI bubble pops it’ll drag the rest of the tech sector with it.

I’m a pentester for a company that exclusively pentests mature corps.

They are not spending jack shit on security in most cases.

If I find publicly facing critical IDORs in your 100+ year old company, you clearly need to get your shit together.

Keyboard substituted the wrong word, fixed.

A collapse will lead to job losses in the short term.

The longer it is dragged out the more jobs will be lost when the bubble bursts.

True, but I still don’t hope for a collapse. A soft landing is better, if unlikely.

There is zero chance of a soft landing.

There are degrees of collapse too. I don’t mind if all the billionaires and corporations are left holding the bag. I am concerned that we have a collapse of the stock market and lost jobs and recession globally.

Please don’t get my hopes up 😭

“We are now confident we know how to build AGI as we have traditionally understood it”

Someone should go and ask him what his traditional understanding of AGI is. He will likely come up with something cheesy and generic like “a computer which can solve any problem a human can, better and faster” without realizing the depth of this concept.

Every time they release a new model they do this “omg we’re so close to AGI bro” rigmarole ffs

AGI is when you ask the computer a question and it blanks you because it doesn’t like you.

Just gives an exhausted sigh in response to every query.

Then ghosts you every time.

Meanwhile, the Chinese and other open-weights models are killing it. GLM 4.5 is sick. Jamba 1.7 is a great sleeper model for stuff outside coding and STEM. The 32Bs we have, like EXAONE and Qwen3 (and finetuned experiments), are mad for 20GB files, and they’re crowding out APIs. There are great little MCP models like Jan too.

Are they AGI? Of course not. They are tools, and that’s what was promised; but the improvements are real.

Turns out, closed source tech bro corporate enshittification is not a sustainable plan. Who knew…

Did you miss that OpenAI released the gpt-oss models a few days prior to GPT-5?

Larger model: https://huggingface.co/openai/gpt-oss-120b

Smaller model: https://huggingface.co/openai/gpt-oss-20b

They seem to be quite good.

Not saying that OpenAI would be the good guys here, but I believe they are realizing that they are behind on local models.

Nah, I tried 20B and a bit of 120B. For the size, they suck, mostly because there’s a high chance they will randomly refuse anything you ask them unless it’s STEM or code.

…And there are better models if all you need is STEM and code.

Look around LocalLLaMA and the AI communities; they’re kind of a laughing stock, even more than Llama 4.

I haven’t played with it too much yet, but Qwen 3 seems better than gpt-oss.

In my limited testing it seemed relatively trigger-happy on refusals, and the results were not impressive either. Maybe on par with 3.5?

Although it is fast at least.

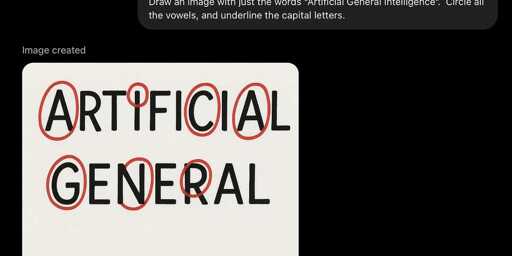

Why does everybody want to make it count letters and stuff like this? Isn’t this what it’s worst at? I’m against AI, but this seems like using it for what it’s worst at and wasn’t designed to do, like checking how good a bike is by trying to ride it underwater.

It’s a quick way to say that the emperor still has no clothes.

We’re boiling the oceans to train the models, and these are well-publicised failure modes. If they haven’t fixed it, it seems to suggest they CAN’T fix it with the tools and architecture they have. So what other problems is it whiffing on that aren’t trivially checkable?

If marketing boxed in the product and said “it does these ten things well”, we might be willing to forgive limitations when we leave its wheelhouse. Nobody kvetches that Microsoft Word is an awful IDE, after all. But that would require a retreat from a public that’s been promised Lt. Cmdr. Data in your pocket, and investors that have priced it as such.

As for OpenAI: GPT-5 has only improved in terms of coding, so as not to fall behind Anthropic. In addition, it does not consume more resources than its previous models.

In other aspects there has been no improvement, a slight decline, or only minimal improvement. Does this mean that OpenAI and the sector are going to collapse?

Probably not, because the problems were limited to the launch of this model. In the coming months they will likely launch another model that solves this, although it may not.

It may not consume more resources, but the older models were already consuming way too many resources to pump out their bullshit machines


I wouldn’t be surprised if they just wrote a script for it to use in the background specifically for this sort of question in the future and called it a day. Just to shut up the critics.

They seem to already be doing that as examples come up like glue on pizza, but there are a nearly infinite number of similar issues that are going to need scripting.

You shouldn’t confront a bullshitter when you catch it in the act!

AI seems to know everything, until it’s a topic where you have firsthand knowledge.

Why does everybody want to make it count letters and stuff like this?

Dunno about the others; I do it because it clearly shows that those models are unable to understand and follow simple procedures, such as the ones needed to count letters, multiply numbers (including large ones; the procedure is the same), check whether a sequence of words forms a valid SATOR square, etc.

And by showing this, a few things become evident:

- That anyone claiming we’re a step away from AGI is a goddamn liar, if not worse (a gullible pile of rubbish).

- That all talk about “hallucinations” is a red herring analogy.

- That the output of those models cannot be used in any situation where reliability is essential.
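For a sense of how small those procedures are, here is a rough Python sketch of three of them. It is illustrative only and makes no claim about how any model works internally. The function names are hypothetical, and the SATOR check assumes one common definition of a valid square: every row equals the matching column, and the whole grid reads the same backwards letter by letter.

```python
def count_letter(word: str, letter: str) -> int:
    """Count occurrences of a letter, ignoring case."""
    return word.lower().count(letter.lower())


def long_multiply(a: str, b: str) -> str:
    """Schoolbook multiplication of two non-negative decimal digit strings.

    The same digit-by-digit procedure works for any length of input.
    """
    result = [0] * (len(a) + len(b))
    # Multiply every digit pair and add into the matching column.
    for i, da in enumerate(reversed(a)):
        for j, db in enumerate(reversed(b)):
            result[i + j] += int(da) * int(db)
    # Propagate carries from the lowest column upward.
    for k in range(len(result) - 1):
        result[k + 1] += result[k] // 10
        result[k] %= 10
    digits = "".join(str(d) for d in reversed(result)).lstrip("0")
    return digits or "0"


def is_sator_square(words: list[str]) -> bool:
    """Assumed definition: rows equal columns, and the square is a palindrome."""
    grid = [w.upper() for w in words]
    n = len(grid)
    if any(len(row) != n for row in grid):
        return False
    # Rows must read the same as columns: grid[i][j] == grid[j][i].
    for i in range(n):
        for j in range(n):
            if grid[i][j] != grid[j][i]:
                return False
    # Read as one string, the grid must be the same backwards.
    flat = "".join(grid)
    return flat == flat[::-1]


if __name__ == "__main__":
    print(count_letter("strawberry", "r"))
    print(long_multiply("123456789", "987654321"))
    print(is_sator_square(["SATOR", "AREPO", "TENET", "OPERA", "ROTAS"]))
```

None of this needs anything beyond following the steps exactly, which is the point being made: a system that "understands" a procedure should not get it right for short inputs and wrong for long ones.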

It’s a chatbot that can see and draw, but rich idiots keep pushing it as an oracle. As if “a chatbot that can see and draw” isn’t impressive enough.

Three years ago, ‘label a tandem bicycle’ would’ve produced a tricycle covered in squiggles. Four years ago it was impossible. I don’t mean ‘really really hard.’ I mean we had no fucking idea how to make that program. People have been trying since code came on punchcards.

LLMs can almost-sorta-kinda do it, despite being completely the wrong approach. It’s shocking that ‘guess the next word’ works this well. I’m confused by the lack of experimentation in, just… asking a different question. Diffusion’s doing miracles with ‘estimate the noise.’ Video generators can do photorealism faster and cheaper than an actual camera.

The problem is, rich idiots claim this makes it an actual camera. In that context, it’s fair to point out when a video shows the Eiffel Tower in Berlin. It’s deeply impressive that computers can do that, now. But we can’t let it guide people’s vacation plans.

Because they are promoted as being able to do anything when jammed into everything.

Why does everybody want to make it count letters and stuff like this?

We do not applaud the tenor for clearing his throat, as they say; yes. But we also do not applaud the tenor who can’t even do that.

This particular model was advertised as being able to use tools, like calculators. And it includes a calculator tool in the package.

So, in theory, it should not have the probabilistic limitations of its native algorithms.

However, there are still the limitations created by multi-turn conversations, where the model will change its answer in response to the user’s previous inputs. This may have become even worse in GPT-5 because it supposedly remembers previous conversations.